Homoscedasticity is the situation in which the error term has the same variance for all values of the independent variables.

The model also assumes little or no multicollinearity between the features (independent variables). When multicollinearity is present, it may be difficult to find the true relationship between the predictors and the target variable. In other words, it is hard to determine which predictor variable is affecting the target variable and which is not.
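One simple way to look for multicollinearity is to inspect the pairwise correlations between the feature columns. The sketch below assumes NumPy is available; the helper name `max_abs_feature_correlation` and the synthetic data are hypothetical, made up for illustration only. What counts as "too high" a correlation is a judgment call and is not fixed by this example.

```python
import numpy as np

def max_abs_feature_correlation(X):
    """Return the largest absolute pairwise correlation between feature columns."""
    corr = np.corrcoef(X, rowvar=False)                   # feature-by-feature correlation matrix
    off_diag = corr[~np.eye(corr.shape[0], dtype=bool)]   # drop the diagonal of 1s
    return float(np.max(np.abs(off_diag)))

# Synthetic features (assumption: illustrative data only).
rng = np.random.default_rng(0)
x1 = rng.normal(size=200)
x2 = rng.normal(size=200)                          # generated independently of x1
x3 = 2.0 * x1 + rng.normal(scale=0.01, size=200)   # almost an exact multiple of x1

independent = np.column_stack([x1, x2])
collinear = np.column_stack([x1, x3])

print(max_abs_feature_correlation(independent))  # small: features are unrelated
print(max_abs_feature_correlation(collinear))    # close to 1: strong multicollinearity
```

Pairwise correlation only catches relationships between two features at a time; a feature that is a combination of several others would need a different check.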

Small or no multicollinearity between the features: Multicollinearity means high correlation between the independent variables.

Linear relationship between the features and target: Linear regression assumes a linear relationship between the dependent and independent variables.

These are some of the important assumptions of linear regression. They act as formal checks while building a linear regression model and help to get the best possible result from the given dataset.

Goodness of fit describes how well the regression line fits the set of observations. The process of finding the best model out of various candidate models is called optimization. Goodness of fit can be measured with R-squared:

- R-squared is a statistical measure of goodness of fit.
- It measures the strength of the relationship between the dependent and independent variables on a scale of 0-100%.
- A high R-squared value indicates a small difference between the predicted values and the actual values, and hence a good model.
- It is also called the coefficient of determination, or the coefficient of multiple determination for multiple regression.
- It can be calculated from the formula below, where $y_i$ is an actual value, $\hat{y}_i$ is the corresponding predicted value, and $\bar{y}$ is the mean of the actual values:

$$R^2 = 1 - \frac{\sum_{i}(y_i - \hat{y}_i)^2}{\sum_{i}(y_i - \bar{y})^2}$$

Gradient descent:

- Gradient descent is used to minimize the MSE by calculating the gradient of the cost function.
- A regression model uses gradient descent to update the coefficients of the line by reducing the cost function.
- It starts from randomly selected coefficient values and then iteratively updates them to reach the minimum of the cost function.

Residuals: The distance between the actual value and the predicted value is called the residual. If the observed points are far from the regression line, the residuals will be large, and so will the cost function. If the scatter points are close to the regression line, the residuals will be small, and so will the cost function.
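The gradient descent procedure and the R-squared measure described above can be sketched together for a single-feature line $y = a_0 + a_1 x$. This is a minimal illustration, not a production routine: the function names, the learning rate `lr`, the number of `steps`, the `seed`, and the noise-free toy data are all assumptions chosen so that the loop converges on this small example.

```python
import numpy as np

def gradient_descent(x, y, lr=0.05, steps=2000, seed=0):
    """Fit y ~ a0 + a1 * x by minimizing the MSE with plain gradient descent."""
    rng = np.random.default_rng(seed)
    a0, a1 = rng.normal(size=2)            # random starting coefficients
    n = len(x)
    for _ in range(steps):
        residual = y - (a0 + a1 * x)       # actual minus predicted
        grad_a0 = -2.0 / n * residual.sum()          # d(MSE)/d(a0)
        grad_a1 = -2.0 / n * (residual * x).sum()    # d(MSE)/d(a1)
        a0 -= lr * grad_a0                 # step against the gradient
        a1 -= lr * grad_a1
    return a0, a1

def r_squared(y, y_pred):
    """R^2 = 1 - (residual sum of squares) / (total sum of squares)."""
    ss_res = np.sum((y - y_pred) ** 2)
    ss_tot = np.sum((y - np.mean(y)) ** 2)
    return 1.0 - ss_res / ss_tot

# Toy data lying exactly on y = 1 + 2x (assumption: illustrative only).
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = 1.0 + 2.0 * x

a0, a1 = gradient_descent(x, y)
print(a0, a1)                          # should approach 1 and 2
print(r_squared(y, a0 + a1 * x))       # should approach 1 for this noise-free data
```

The learning rate matters: if it is too large for the scale of `x`, the updates overshoot and the cost grows instead of shrinking, so on other data it would need tuning.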
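We can use the cost function to measure how accurate the mapping function is, that is, the function that maps the input variable to the output variable. This mapping function is also known as the hypothesis function.

For linear regression, we use the Mean Squared Error (MSE) cost function, which is the average of the squared errors between the predicted values and the actual values. For the linear equation $y = a_0 + a_1 x$, the MSE can be written as:

$$\text{MSE} = \frac{1}{N}\sum_{i=1}^{N}\left(y_i - (a_1 x_i + a_0)\right)^2$$

where $N$ is the total number of observations, $y_i$ is the actual value, and $a_1 x_i + a_0$ is the predicted value.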
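As a small worked example of the MSE, the sketch below evaluates two candidate lines on three points. The names `hypothesis` and `mse` and the data values are hypothetical, picked so the arithmetic is easy to check by hand.

```python
import numpy as np

def hypothesis(x, a0, a1):
    """Mapping (hypothesis) function: predicted y for input x."""
    return a0 + a1 * x

def mse(y_true, y_pred):
    """Average of the squared differences between actual and predicted values."""
    return float(np.mean((y_true - y_pred) ** 2))

x = np.array([1.0, 2.0, 3.0])
y = np.array([2.0, 4.0, 6.0])      # points lying exactly on y = 2x

perfect = mse(y, hypothesis(x, a0=0.0, a1=2.0))   # predictions 2, 4, 6
shifted = mse(y, hypothesis(x, a0=1.0, a1=2.0))   # predictions 3, 5, 7: each off by 1

print(perfect, shifted)   # 0.0 1.0
```

The second line misses every point by exactly 1, so each squared error is 1 and their average is 1.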

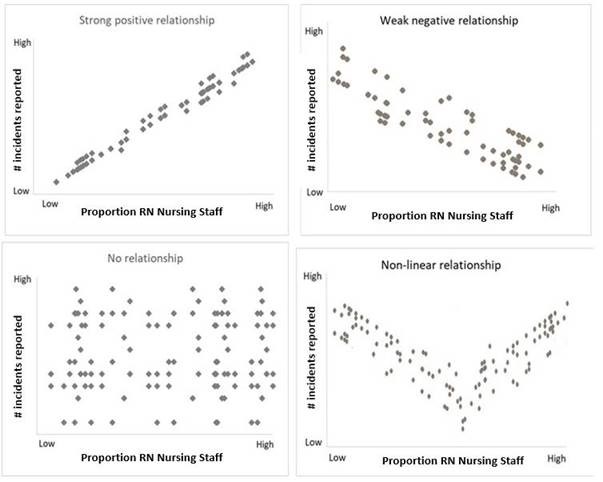

The cost function serves several purposes:

- Different values for the weights or coefficients of the line ($a_0$, $a_1$) give different regression lines, and the cost function is used to estimate the coefficient values for the best fit line.
- The cost function optimizes the regression coefficients or weights.
- It measures how well a linear regression model is performing.

When working with linear regression, our main goal is to find the best fit line, meaning that the error between the predicted values and the actual values should be minimized. The best fit line will have the least error. Because different values of $a_0$ and $a_1$ give different regression lines, we need to calculate the best values for $a_0$ and $a_1$, and we use the cost function to do so.

If the dependent variable increases on the Y-axis as the independent variable increases on the X-axis, the relationship is called a positive linear relationship. If the dependent variable decreases on the Y-axis as the independent variable increases on the X-axis, the relationship is called a negative linear relationship.
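To make the idea of a best fit line and its direction concrete, the sketch below uses the closed-form least-squares formulas for a single feature, which give the $a_0$ and $a_1$ that minimize the MSE directly. This is a swapped-in technique for illustration, not the iterative approach the article describes elsewhere, and the function names and data are hypothetical.

```python
import numpy as np

def fit_line(x, y):
    """Closed-form least-squares estimates of a0 (intercept) and a1 (slope)."""
    x_mean, y_mean = x.mean(), y.mean()
    a1 = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)
    a0 = y_mean - a1 * x_mean
    return float(a0), float(a1)

def relationship(a1):
    """Classify the linear relationship by the sign of the slope."""
    if a1 > 0:
        return "positive"
    if a1 < 0:
        return "negative"
    return "none"

x = np.array([1.0, 2.0, 3.0, 4.0])
rising = np.array([3.0, 5.0, 7.0, 9.0])     # y = 1 + 2x
falling = np.array([8.0, 6.0, 4.0, 2.0])    # y = 10 - 2x

a0_up, a1_up = fit_line(x, rising)
a0_down, a1_down = fit_line(x, falling)

print(a0_up, a1_up, relationship(a1_up))         # slope 2, positive relationship
print(a0_down, a1_down, relationship(a1_down))   # slope -2, negative relationship
```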

A straight line showing the relationship between the dependent and independent variables is called a regression line. A regression line can show two types of relationship: positive linear and negative linear.

Linear regression can be further divided into two types of algorithm:

- Simple Linear Regression: If a single independent variable is used to predict the value of a numerical dependent variable, the algorithm is called Simple Linear Regression.
- Multiple Linear Regression: If more than one independent variable is used to predict the value of a numerical dependent variable, the algorithm is called Multiple Linear Regression.

In the linear equation $y = a_0 + a_1 x$:

- $y$ = dependent variable (target variable)
- $x$ = independent variable (predictor variable)
- $a_0$ = intercept of the line (gives an additional degree of freedom)
- $a_1$ = linear regression coefficient (scale factor applied to each input value)

The values of the $x$ and $y$ variables are the training data for the linear regression model.
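The difference between simple and multiple linear regression is mostly the number of feature columns. As a hedged sketch, the hypothetical helper `fit_linear` below handles both cases with NumPy's `np.linalg.lstsq`; the toy data is noise-free and invented for illustration.

```python
import numpy as np

def fit_linear(X, y):
    """Least-squares fit; returns [a0, a1, ...] with the intercept first."""
    X = np.asarray(X, dtype=float)
    if X.ndim == 1:
        X = X.reshape(-1, 1)                           # one feature -> one column
    design = np.column_stack([np.ones(len(X)), X])     # column of 1s for the intercept
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

x1 = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
x2 = np.array([1.0, 0.0, 2.0, 1.0, 3.0])

y_simple = 1.0 + 2.0 * x1                  # depends on one feature
y_multiple = 1.0 + 2.0 * x1 - 3.0 * x2     # depends on two features

simple_coef = fit_linear(x1, y_simple)                               # simple linear regression
multiple_coef = fit_linear(np.column_stack([x1, x2]), y_multiple)    # multiple linear regression

print(simple_coef)     # approximately [1, 2]
print(multiple_coef)   # approximately [1, 2, -3]
```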
